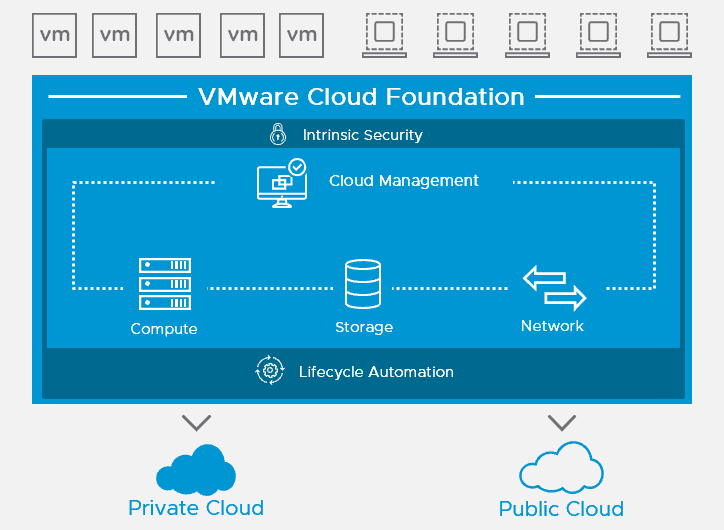

It’s been a while since my last blog post, mainly because I was working on a couple of interesting engagements where I was co-designing and deploying VMware Cloud Foundation 3.0 for a number of our customers. If you are not familiar with VCF, I suggest having a look at the product page on the VMware website.

Since VCF 3.0 is a fairly new and emerging VMware product, I will share my experience in a small how-to blog series. It walks through the VCF 3.0 requirements and preparation, and gives you an idea of how the product is deployed and configured. Note that VCF 3.0 currently supports greenfield deployments only.

Last update: 24 December 2018

VCF 3.0 Requirements and Preparation

Reading List

Before you start the deployment, you should read the following:

- Release notes and patch release notes

- Planning and Preparation guide

- Architecture and Deployment guide

- Operations and Administration guide

- Site Protection and Disaster Recovery (if you are planning for it)

The documentation mentioned above can be found on the VMware Docs website.

Network

In contrast to previous releases, VCF 3.0 introduces a “Bring-your-own-Network” concept where customers can build the network switching infrastructure with hardware of their choice.

- Since VCF 3.0 supports greenfield deployments only, consider using Jumbo Frames on all VLANs. At a minimum, an MTU of 1600 is required on the NSX VTEP VLAN. Make sure that Jumbo Frames are configured end-to-end.

- No Ethernet trunking technology (LAG/vPC/LACP) is used on the ESXi host NICs facing the physical switches.

- VLANs for Management Network, vMotion, vSAN, and NSX VTEP are created and tagged to all host ports. Each VLAN is 802.1Q tagged.

- IP address ranges, subnet masks, and a reliable L3 default gateway are defined for the following VLANs:

  - Management Network

  - vMotion Network

  - vSAN Network

  - VXLAN Network

  - vRealize Suite Network (optional; this network is created if you decide to deploy vRealize Automation or vRealize Operations Manager from the SDDC Manager)

- Define two network pools, one for the vSAN network and one for the vMotion network. They are used to automate the IP configuration and assignment of the VMkernel ports on the ESXi hosts.

- Verify that a DHCP service with an appropriate scope size (2 IP addresses per ESXi host) is configured for the VTEP VLAN. Remember to size the scope for future growth.

- Acquire the hostnames and IP addresses for the following external services prior to the deployment:

  - NTP

  - Active Directory

  - DNS

  - Certificate Authority

- The above services must be accessible and resolvable by IP address and fully qualified domain name.
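
The name-resolution requirement above can be spot-checked before the bring-up. The following is a minimal Python sketch, not part of any VCF tooling: it does a forward lookup of an FQDN and a reverse lookup of the returned address with the standard socket module. The function name check_dns is made up for illustration, and the two resolver parameters are an assumption added only so the logic can be exercised without a live DNS server.

```python
import socket


def check_dns(fqdn, forward=socket.gethostbyname, reverse=socket.gethostbyaddr):
    """Return (ok, detail) for a forward and reverse lookup of fqdn.

    forward: callable mapping a hostname to an IPv4 address string.
    reverse: callable mapping an IP address to (hostname, aliases, addresses).
    Both default to the standard library resolvers.
    """
    try:
        ip = forward(fqdn)
    except OSError as exc:  # socket.gaierror is a subclass of OSError
        return False, f"forward lookup failed: {exc}"
    try:
        name, aliases, _ = reverse(ip)
    except OSError as exc:  # socket.herror is a subclass of OSError
        return False, f"reverse lookup of {ip} failed: {exc}"
    # Compare case-insensitively, ignore a trailing dot, accept an alias match.
    wanted = fqdn.rstrip(".").lower()
    found = {n.rstrip(".").lower() for n in [name, *aliases]}
    if wanted not in found:
        return False, f"{ip} resolves back to {name}, not {fqdn}"
    return True, ip
```

Calling check_dns with the FQDN of each NTP, Active Directory, DNS, and Certificate Authority server returns a (True, ip) tuple when the forward and reverse records agree, and (False, reason) otherwise. The same check applies to the ESXi management addresses.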

ESXi Hosts (management)

- You require at least 4 ESXi hosts for the VCF bring-up.

- Verify the ESXi hosts and their components (BIOS, RAID controllers, SSDs, etc.) against the vSAN HCL. Identical hardware is highly recommended.

- Verify that the hardware meets the minimum hardware requirements for the management cluster.

- Register the Management Network IP addresses of all ESXi hosts in DNS. Verify that the IP addresses are resolvable (forward and reverse).

- Provision the ESXi hosts with the image build version specified in the VCF release notes under the BOM section. Use the vendor-branded image if required. You can choose to provision the hosts manually or use the imaging service (VIA) on the Cloud Builder.

- If you provision the hosts manually, configure the standard settings such as IP address, root password, DNS, etc. as you would normally do on any ESXi host.

- Start the TSM-SSH service on each ESXi host and set the policy to “Start and stop with host”.

- Configure the NTP server on each ESXi host, start the service and set the policy to “Start and stop with host”.

- Verify that each ESXi host is running a non-expired license. You can use the evaluation license.

- Verify that only 2 physical NICs are presented to the ESXi host. VCF 3.0.x currently only supports 2 physical NICs per ESXi host.

- Verify that one physical NIC is configured and connected to the vSphere standard switch and the second physical NIC is not configured and not connected.

- Configure the Management Network and the VM Network portgroups with the same VLAN ID on every ESXi host.

- If you are using Jumbo Frames, verify the MTU of the following components:

  - Physical switches

  - Virtual switches

  - Port groups

  - VMkernel ports

- Double-check the Jumbo Frames connectivity with the vmkping command combined with the -d (do not fragment) and -s (payload size) switches. Example: # vmkping -I vmk1 -d -s 8972 10.1.1.10

- Verify that the caching and capacity disks are eligible for vSAN. Run the vdq -q command on the ESXi hosts and check that each naa.xxxxxx disk identifier reports the state “Eligible for use by VSAN”. Delete any existing partitions if necessary.

- If you are deploying an All-Flash vSAN cluster, make sure your vSAN capacity disks are marked with the tag “capacityFlash” to be able to create disk groups.

- Verify that the health status is “healthy” and without any errors on every ESXi host.
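
The 8972 in the vmkping example above is not arbitrary. With the -d switch set, the payload passed to -s has to leave room inside the MTU for the 20-byte IPv4 header and the 8-byte ICMP header. Here is a small Python sketch of that arithmetic, assuming IPv4 without IP options; the function name is made up for illustration.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def vmkping_payload_size(mtu):
    """Largest ICMP payload that fits in one unfragmented IPv4 packet."""
    payload = mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES
    if payload < 0:
        raise ValueError(f"MTU {mtu} is too small for an ICMP echo request")
    return payload


# Print the -s value to use for a few common MTU settings.
for mtu in (1500, 1600, 9000):
    print(f"MTU {mtu}: vmkping -d -s {vmkping_payload_size(mtu)}")
```

For a VTEP VLAN configured with the minimum MTU of 1600, the matching test size is therefore 1572 rather than 8972.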

Cloud Builder

VCF 3.0.x uses the Cloud Builder virtual appliance for the bring-up process. It is an OVA and you can download it from My VMware along with the Cloud Builder Deployment Parameter Guide.

- The Cloud Builder virtual machine requires 4 vCPUs, 4 GB of memory, and 350 GB of storage.

- Cloud Builder can be deployed on a laptop running VMware Workstation or Fusion. A dedicated ESXi host is also supported. Both options require a network connection to the same network as the Management Network interface of the ESXi hosts.

- Deploy the Cloud Builder as any other OVA and provide the following settings during the setup:

  - IP address

  - Subnet mask

  - Default gateway

  - Hostname

  - DNS servers

  - NTP servers

  - Password

- Once the Cloud Builder has been deployed, you can log in and download the Cloud Builder Deployment Parameter Guide (vcf-ems-template.xlsx), which is basically the same file that you can download from My VMware.

Once every requirement is in place, you can proceed with the VCF 3.0 bring-up phase.

Cheers!

– Marek.Z

Also if you are deploying All-Flash vSAN clusters, make sure your vSAN capacity disks are marked with the tag ‘capacityFlash’ to be able to create disk groups. Check the release notes for the correct esxcli command to do this, https://docs.vmware.com/en/VMware-Cloud-Foundation/3.0.1/rn/VMware-Cloud-Foundation-301-Release-Notes.html

Thanks Erik. Added to the ESXi host requirements.