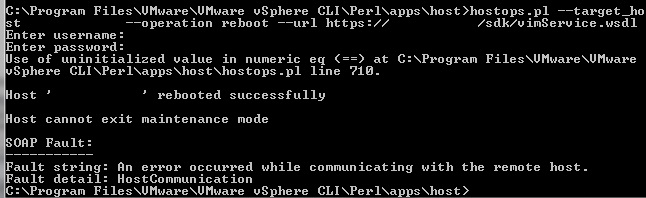

How to: Back up and restore ESXi configuration using vMA

A quick blog post about backing up and restoring the configuration of your ESXi hosts. It will shorten your recovery time when something goes wrong during an upgrade or in […]

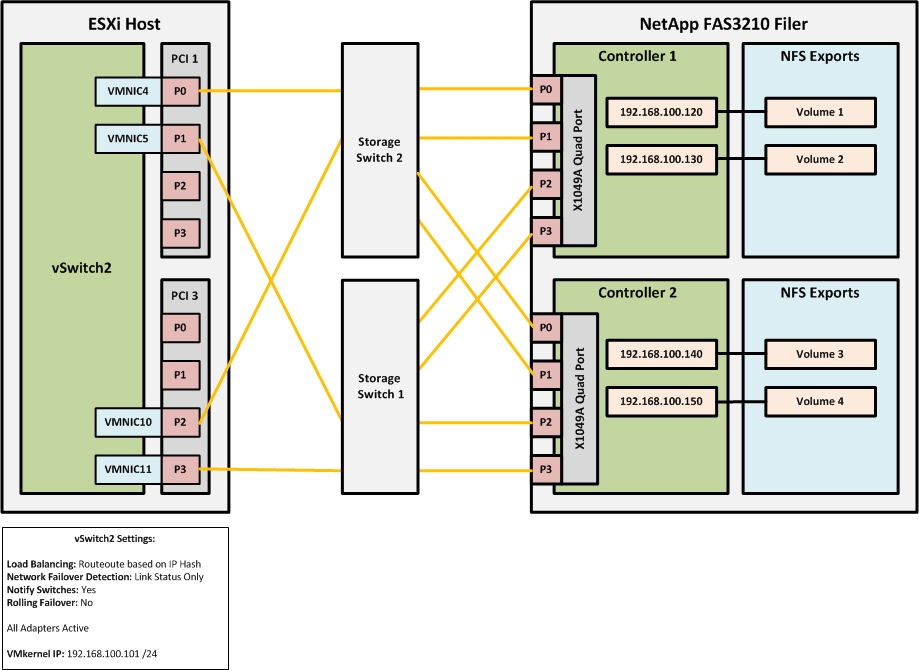

When configuring an NFS storage network for one of our customers some time ago, I noticed that the ESXi host wasn’t utilizing all NICs assigned to the NIC team for […]

Here is a quick step-by-step guide on how to install the Dell OpenManage software on an ESXi host using the VMware vSphere Command-Line Interface (vSphere CLI) or the VMware […]

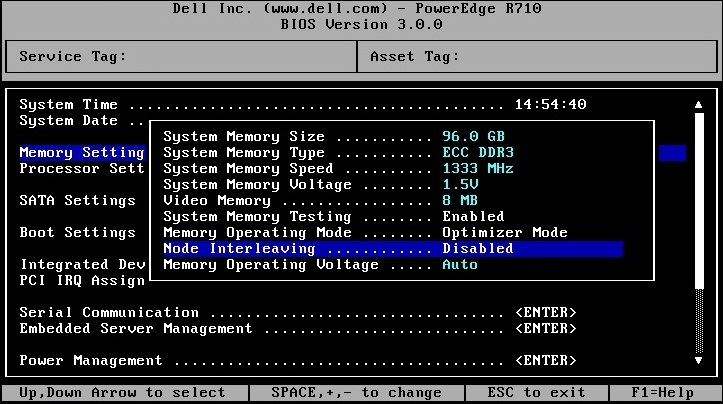

Recently, I was involved in a large infrastructure refresh project for one of our customers across several locations in Europe. The old hosts were replaced with the brand-new Dell […]

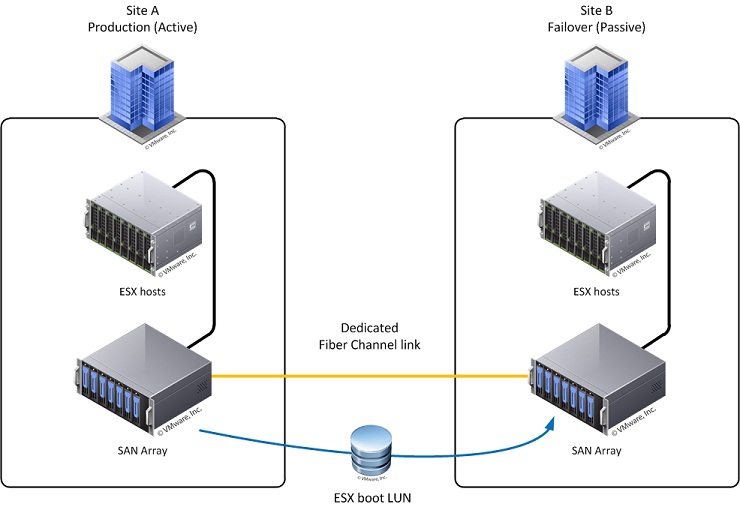

Is it possible to boot an ESX 4 host from a LUN that has been “failed over” to another physical location? Yes, it is possible, but there are some serious […]

Copyright © 2024 | DefaultReasoning.com